Blog

Engineering stories, security deep-dives, and lessons from shipping AI-powered test automation.

We Redesigned 14 Landing Pages — Through Our Own AI Review Gates

22 commits, 3 PRs, a 5-persona AI review, and every commit gated by SAST + prompt-injection + brute-force checks. If we don’t trust our pipeline with our own brand pages, why would you?

Read more →

Teaching the Reviewer: How 👍/👎 on a PR Comment Rewires the Next Review

A single click on a QualityMax PR comment becomes durable, per-repo knowledge the reviewer retrieves on the next PR. Here’s the plumbing — three feedback channels, one storage layer, and the GitHub-webhook limitation that forced us to build a poller.

Read more →

Two Posts, Same Day: The Gap Between AI Policy and Vibe Coding

One mature engineering org writes a 27-page AI policy with the rule “if you can’t explain the code, don’t commit it.” One workshop ships 10 live websites in an afternoon with Lovable and Cursor. The gap between them is the whole QualityMax market.

Read more →

The Möbius Strip QA Loop: When the Tool Tests Itself

Most QA tools sit outside the code they test. QualityMax sits inside — and now monitors its own errors, generates its own regression tests, closes its own loops. A single-sided surface where tool and target merge.

Read more →

Product Update

Your AI Reviewer Now Asks What You Care About

Interactive calibration for AI code reviews: pick which categories to check, which to skip, and get structured findings with a one-command fix for your LLM agent.

Read more →

Engineering

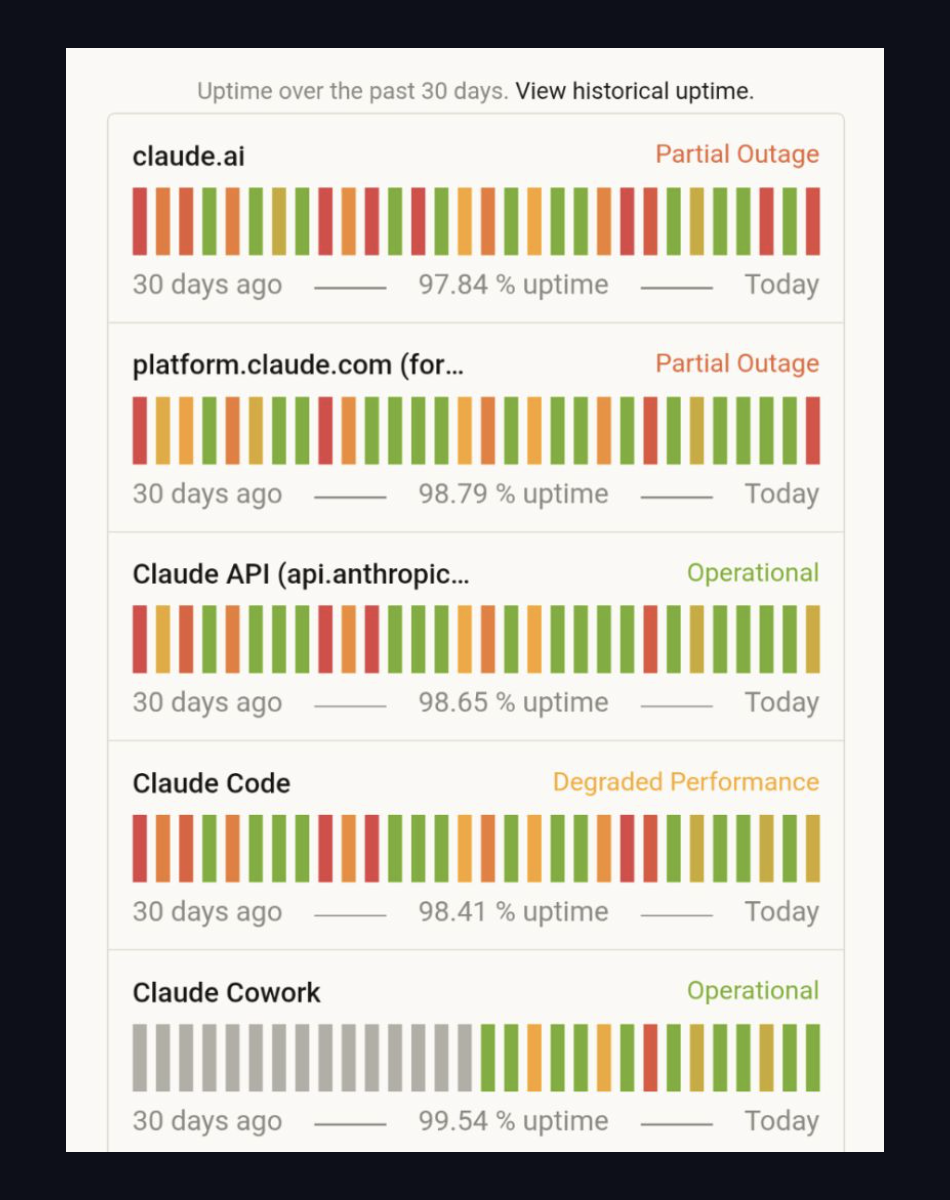

When Claude Goes Down, Your Tests Shouldn't

Today's Anthropic outage left claude.ai partially down and Claude Code degraded. Every AI test platform built on a single LLM provider went down with it. Here's why QualityMax routes per-task across Claude, GPT, and Gemini, and what that costs.

Read more →

Engineering

Building qmax-code: Why We Built Our Own AI Testing Agent

7,951 lines of Go. Charm framework TUI. 48 MCP tools. Not based on Claude Code. Two tools, one mission — here's the engineering story.

Read more →

Analysis

AI Coding Agents Are Secured in the Wrong Direction

The Claude Code source leak reveals an industry-wide gap: AI tools invest in containing the agent but barely verify whether the code it produces is secure. 4% of GitHub commits are now AI-generated. Who's checking them?

Read more →

Real Incident

We Got Brute-Forced on Launch Day

We posted our vibe-check page on Reddit and Hacker News. 1,145 users came. So did a brute-force attack that blew through our Resend email quota in 4 minutes.

Read more →

Engineering

Building the Matrix Demo

Behind the scenes of our interactive demo page — boot sequences, the red pill / blue pill choice, a chat-driven AI terminal, and live Playwright execution in the browser.

Read more →

Security

Building a Hostile Site to Test Our AI

How we created an adversarial website full of prompt injections, XSS traps, and redirect loops to stress-test our AI crawl pipeline, and what we learned.

Read more →